Single Source of Truth, New Data Architectures, and Connected PLM

Connected is such a powerful word. We are connected in so many ways these days. Think about the transformations that have happened in the many services surrounding us over the last decade. Our devices are continuously connected to the internet to give us access to the vital information we need all the time, from the weather forecast and road traffic to the status of our Amazon purchases and grocery deliveries. What does this mean for industrial companies and PLM system architecture? How does the PLM paradigm change to support the “connectivity” between systems, organizations, and processes? I will try to answer these questions today.

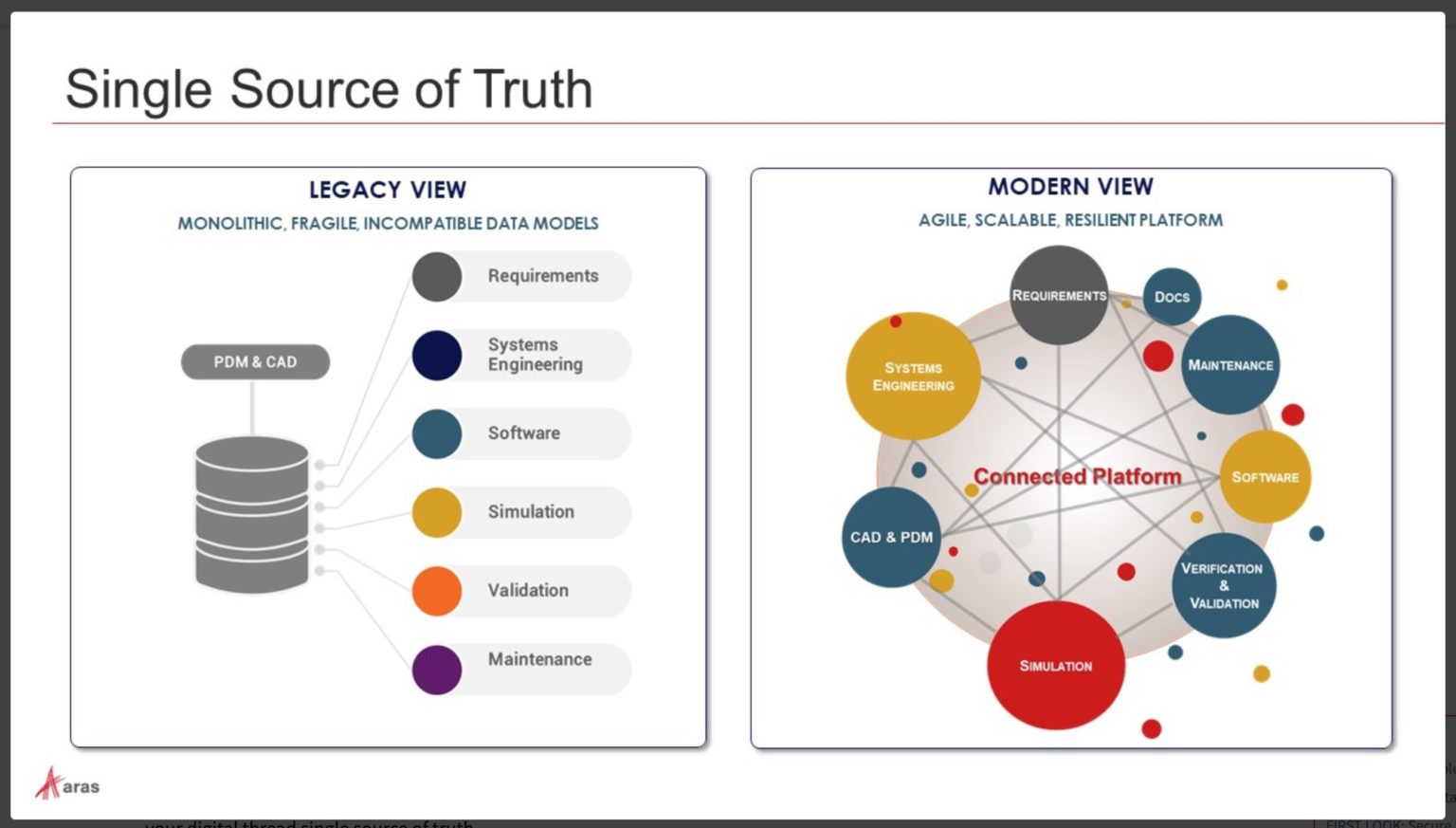

My attention was caught by the Aras article 2020 A&D Wrap-up and Looking Ahead, which discusses, among many other things, a modern view of the Single Source of Truth.

Here is an interesting passage:

The digital thread will keep on threading in 2021. The curious question is—when will people realize that just having data in a database does not make a digital thread? A digital string, yes—but it is still a silo! And when will there be a realization that shoe-horning data into a 30-year-old legacy data model is not the answer? Stock up on digital duct tape—you’re going to need it to keep things running. If you want to make a real change, we need to look at the product development process, the people, and the tools used. (If you don’t know why the people are important, go to any book about Kaizen or the Toyota Production System, and you will realize that the people involved are important.) With this view, we can understand how a firm works, how processes need to be executed, and the proper data structure to support the business’s needs.

I fully agree that placing all data in a single PDM database is a pointless approach. But the Aras picture doesn’t explain the architecture behind the connectivity of the connected platform. The name “Connected Platform” doesn’t explain well what is behind it. Is it still the same old-fashioned SQL database pulling all data strings together, or is it something different?

However, I see connected data architectures as a fundamental goal for all PLM technologies. In my view, changing the PLM paradigm towards connected applications will play a key role in how SSoT morphs in the future. In an article I wrote a year ago, What is PLM Circa 2020, I shared some initial ideas about this transformation. Here is the passage:

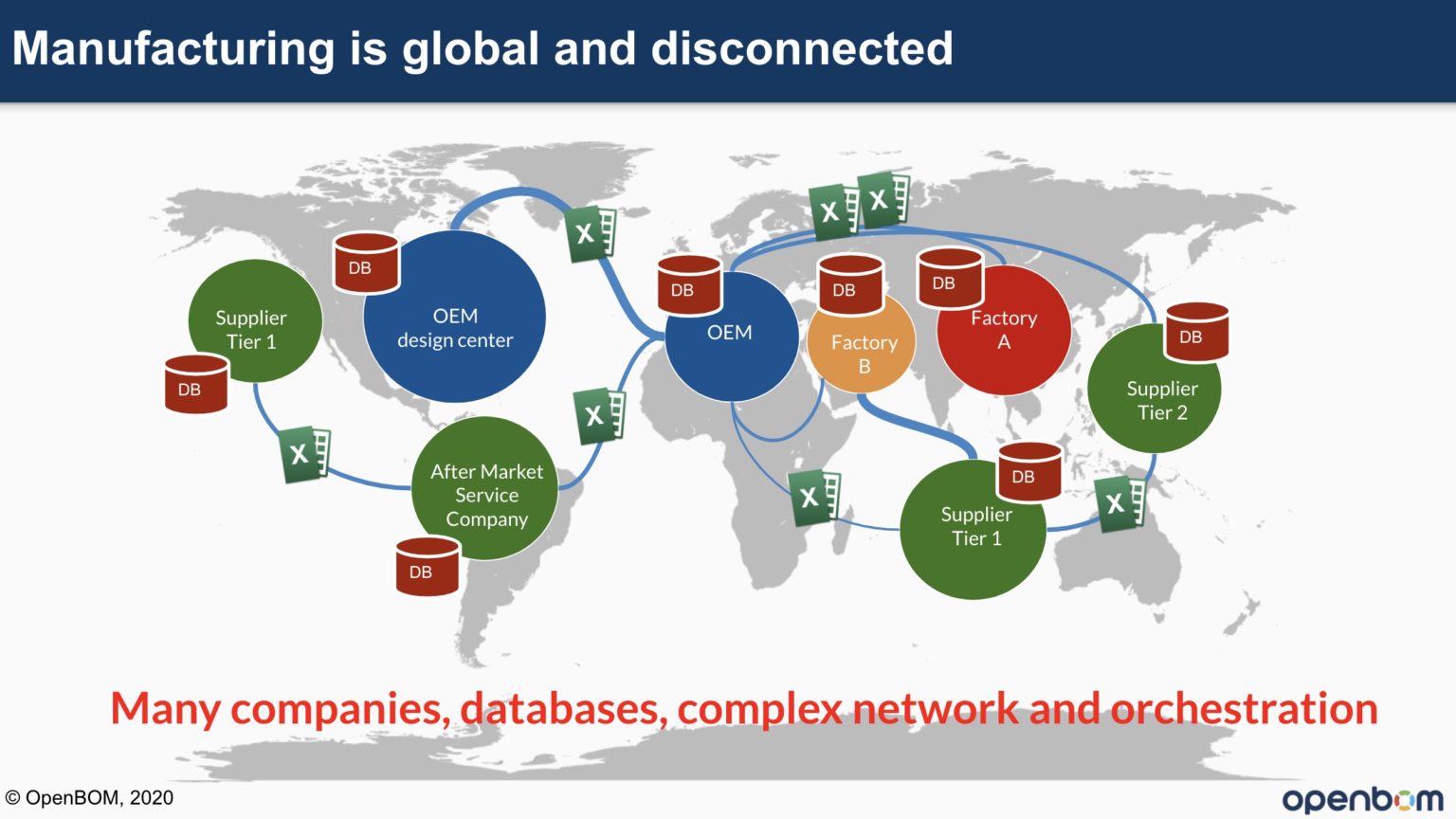

The previous generation of PLM systems was designed on top of an SQL database, installed on company premises to control and manage company data. It was a “single version of the truth” paradigm. Things are changing, and the truth is now distributed. It is not located in a single database in a single company. It lives in multiple places, and it updates all the time. The pace of business is always getting faster. To support such an environment, companies need to move to different types of systems: global, online, optimized to use information coming from multiple sources, and capable of interacting in real time.

Isolated SQL-based architectures running in a single company are a thing of the past. SaaS makes the PLM upgrade problem irrelevant, as everyone is running on the same version of the same software. Furthermore, the cost of systems can be optimized, and SaaS systems can serve small and medium-size companies with the same efficiency as large ones.

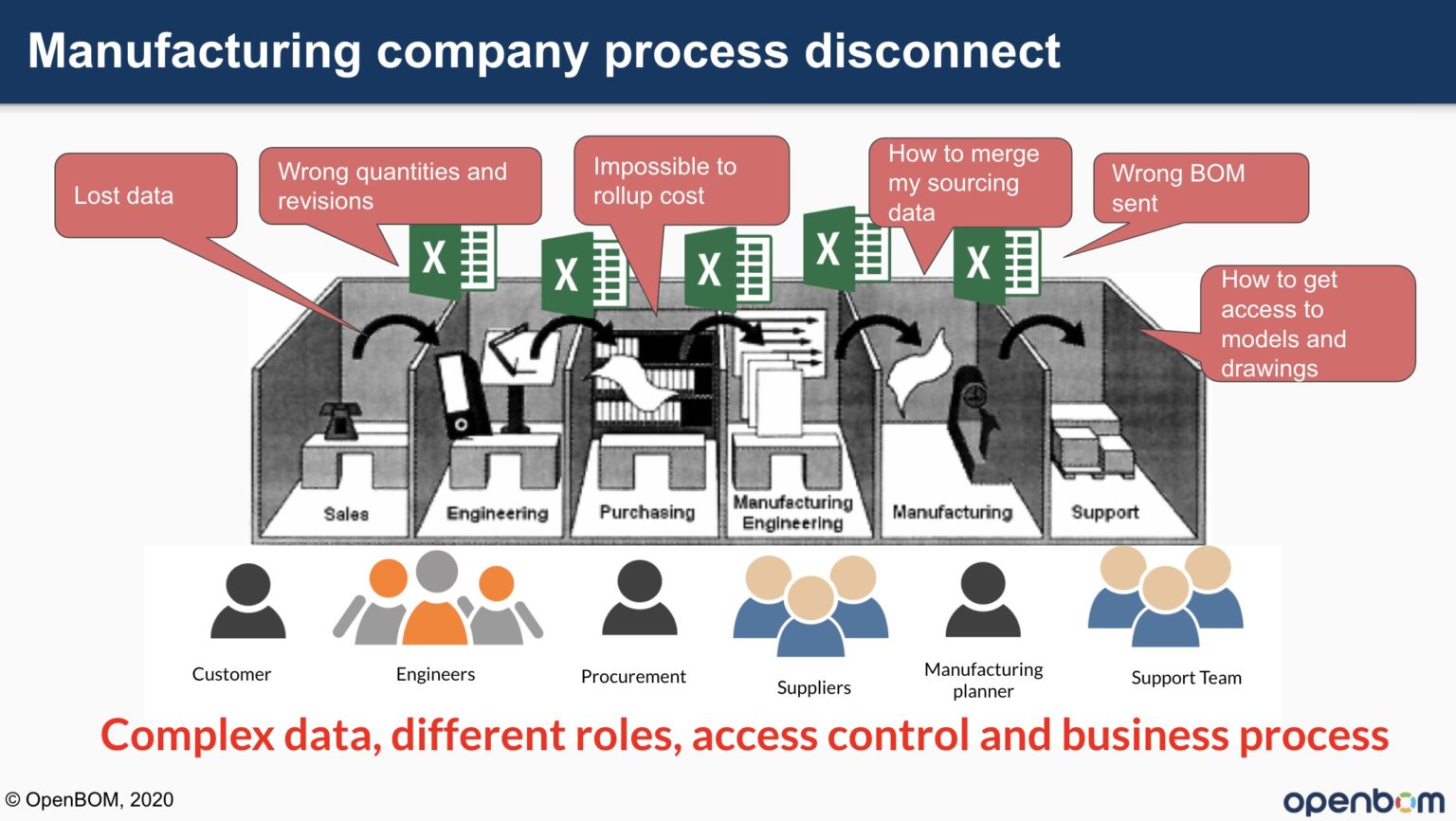

The manufacturing company environment was considered disconnected and compartmentalized by departments and options.

The paradigm of PLM Single Source of Truth (SSoT) was first introduced a few decades ago to solve the problem described in the picture above. As much as this is still a compelling paradigm, the idea of SSoT starts to break down when applied to an environment with many organizations. Take a look at this picture. How will all these companies connect? Will it require ONE BIG PLM DATABASE as suggested by some vendors, or will it require a new architecture?

The idea of SSoT needs to be accompanied by a new form: Single Version of Truth (SVoT). Lionel Grealou presented it clearly in his article Single Source of Truth vs. Single Version of Truth.

SSoT is about data input optimization (integration, input/output synchronization), while SVoT is really about business analytics and reporting optimization (consolidation, alignment). In a nutshell, SSoT and SVoT principles do not contradict each other and can be either combined or considered independently for different purposes and business objectives. SSoT will appeal to data model architects and IT solution integrators, while SVoT will speak to data scientists and business intelligence architects. Achieving SVoT without SSoT is possible with a robust business analytics engine. However, it is much more challenging, and one can argue that it will not be sustainable in the long term. SSoT is a mandatory foundation to optimize data structures at source and minimize essential non-value added activities.

Defining SVoT as an output of connected systems can be compelling if combined with a modern PLM data architecture that relies on polyglot persistence, multi-tenant applications, and microservices. SSoT is the data foundation, which is morphed into virtual, connected data presented in a specific tenant and user context. This is a powerful model, but it requires a fundamental rethinking of data architecture compared to what we have today in the traditional PLM SQL database approach.
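To make the idea a bit more concrete, here is a minimal Python sketch of how a view in a specific tenant and user context could be assembled from more than one kind of store. Everything in it is an assumption made for illustration: the `DocumentStore` and `GraphStore` classes are in-memory stand-ins for two different persistence technologies, and the `VISIBLE_FIELDS` rules, part numbers, and attribute names are hypothetical. It is not how any particular PLM product works.

```python
from dataclasses import dataclass


class DocumentStore:
    """In-memory stand-in for a document database holding item attributes."""

    def __init__(self):
        self._docs = {}

    def put(self, part_number, attributes):
        self._docs[part_number] = dict(attributes)

    def get(self, part_number):
        return dict(self._docs.get(part_number, {}))


class GraphStore:
    """In-memory stand-in for a graph database holding parent -> child usages."""

    def __init__(self):
        self._edges = {}

    def add_usage(self, parent, child, quantity):
        self._edges.setdefault(parent, []).append((child, quantity))

    def children(self, parent):
        return list(self._edges.get(parent, []))


@dataclass(frozen=True)
class Context:
    tenant: str
    role: str


# Assumed visibility rules: which attributes each role is allowed to see.
VISIBLE_FIELDS = {
    "engineer": {"description", "material", "revision"},
    "buyer": {"description", "cost", "supplier"},
}


def build_view(docs, graph, root, ctx):
    """Assemble one BOM level as seen from a given tenant and role."""
    allowed = VISIBLE_FIELDS.get(ctx.role, set())
    rows = []
    for child, quantity in graph.children(root):
        attrs = docs.get(child)
        # In this sketch a tenant only sees the items it owns.
        if attrs.get("tenant") != ctx.tenant:
            continue
        row = {"part_number": child, "quantity": quantity}
        row.update({k: v for k, v in attrs.items() if k in allowed})
        rows.append(row)
    return rows


docs = DocumentStore()
graph = GraphStore()
docs.put("P-100", {"tenant": "acme", "description": "Bracket",
                   "material": "Al 6061", "revision": "B",
                   "cost": 4.2, "supplier": "MetalWorks"})
docs.put("P-200", {"tenant": "other", "description": "Sensor",
                   "revision": "A", "cost": 19.0})
graph.add_usage("A-001", "P-100", 2)
graph.add_usage("A-001", "P-200", 1)

engineer_view = build_view(docs, graph, "A-001", Context("acme", "engineer"))
buyer_view = build_view(docs, graph, "A-001", Context("acme", "buyer"))
```

The same underlying records produce two different outputs here: the engineer sees material and revision, while the buyer sees cost and supplier. The data foundation stays in one place per store, and the "version of truth" is computed on the way out.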

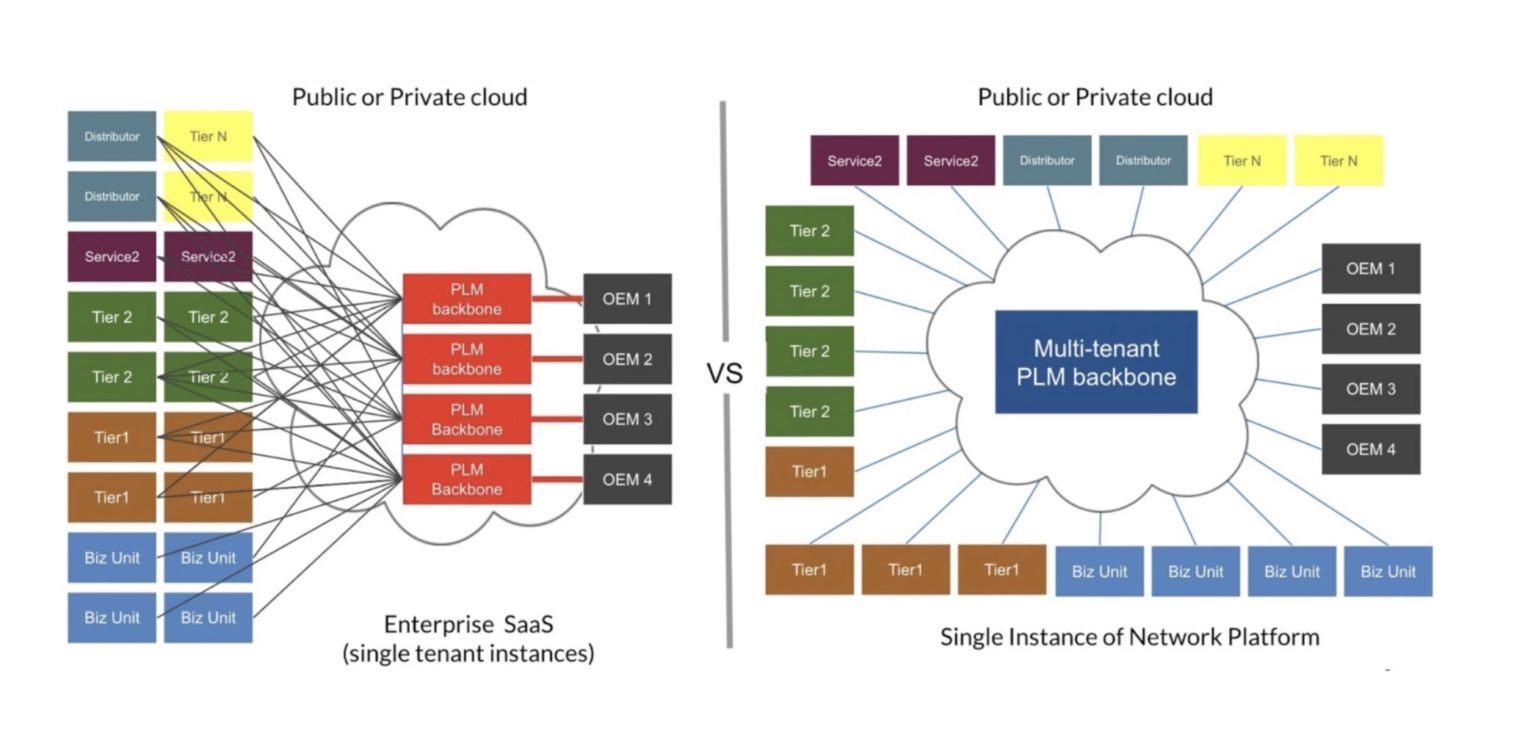

The fundamental idea of the new architecture is a network platform. The core foundation of such a platform is a networking layer that connects tenants and data, making it possible to create powerful Single Version of Truth views that deliver data to the right people and the right organization at the right time. The picture below can explain how to differentiate between Enterprise SaaS hosting platforms and network platforms.
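As a rough illustration of what a networking layer connecting tenants and data could look like, consider the following Python sketch. It is only a toy model under stated assumptions: the `Tenant` and `Network` classes, the explicit sharing grants, and the company and part names are all hypothetical and not taken from any vendor's platform.

```python
class Tenant:
    """One company's own data; each tenant remains the source of its items."""

    def __init__(self, name):
        self.name = name
        self.items = {}  # part number -> attributes


class Network:
    """Hypothetical networking layer: knows tenants and explicit sharing grants."""

    def __init__(self):
        self._tenants = {}
        self._grants = set()  # (owner, part_number, consumer)

    def register(self, tenant):
        self._tenants[tenant.name] = tenant

    def share(self, owner, part_number, consumer):
        self._grants.add((owner, part_number, consumer))

    def resolve(self, consumer, owner, part_number):
        """Return the owner's current data if the consumer may see it, else None."""
        tenant = self._tenants.get(owner)
        if tenant is None:
            return None
        allowed = consumer == owner or (owner, part_number, consumer) in self._grants
        if not allowed:
            return None
        item = tenant.items.get(part_number)
        return dict(item) if item is not None else None


network = Network()
oem = Tenant("oem")
supplier = Tenant("supplier")
network.register(oem)
network.register(supplier)

supplier.items["S-7"] = {"description": "Motor", "revision": "A"}
network.share("supplier", "S-7", "oem")

before = network.resolve("oem", "supplier", "S-7")

# The supplier revises its own item; no copy on the OEM side needs updating.
supplier.items["S-7"]["revision"] = "B"
after = network.resolve("oem", "supplier", "S-7")

# A part that was never shared stays invisible to other tenants.
supplier.items["S-8"] = {"description": "Gearbox", "revision": "A"}
hidden = network.resolve("oem", "supplier", "S-8")
```

The design choice worth noticing is that nothing is copied into one big database. The OEM reads the supplier's data through the network at the moment it asks, so a revision made by the supplier is visible on the next read, and anything not explicitly shared remains private to its owner.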

What is my conclusion?

The network platform is the next evolutionary step in PLM system architecture. It creates a layer that provides access to data independently of any single database (tenant). Such a platform uses a modern data architecture, including a polyglot persistence layer to manage data sources, while optimizing the system’s output to present a specific tenant and role-based view for each consumer of the information. PLM vendors will have to rethink their current SQL-based architectures and develop an evolutionary path towards new network platforms. I can see this development happening now, although it might not be visible or obvious yet. The foundation of such development is different for each PLM vendor. Some of them are expanding current platforms, and others are buying new technologies. For multi-tenant SaaS applications, such a new architecture is a native choice. Over the next several years, we will see a major shift towards modern data architectures and new applications, which will eventually become a new connected PLM architecture. Just my thoughts…

Best, Oleg
