Accelerating CFD With AMD 3D V-Cache™ Technology on Microsoft Azure

Feeding a big bunch of fans on game night

Have you ever wished you had your fridge right next to your sofa when watching a big game? Well, I firmly believe everyone, or at least every engineer, has had that thought before: “I don’t want to miss a big play… what if I could just pick up that cold drink now, without getting off the couch.” For any engineer it’s pretty obvious: This system needs to be optimized. We need a shortcut.

Now, some people have tiny fridges next to the sofa. This is convenient when a couple of friends come over, but there is an issue with that. If you have a bigger bunch to feed, like a house party for the championship, that thing just doesn't offer enough capacity.

Why do I tell you this? Well, because it turns out that when CPU cores grab data to run big CFD simulations in Simcenter STAR-CCM+, it’s a bit like the fans grabbing drinks from the living room fridge to watch the big game.

Feeding the hungry crowd of cores on CFD game day

Simcenter STAR-CCM+ is a powerful CFD tool, but it requires powerful hardware because we have sophisticated users who run demanding multiphysics simulations. An AMD EPYC™ CPU has a big, hungry crowd of x86 cores, and when Simcenter STAR-CCM+ shows up for game day, L3 cache is the living room fridge everyone is grabbing drinks from.

In technical terms, because of the way our software works, more cores and higher clock speeds are not enough on their own to deliver high performance. We need high memory bandwidth at low latency, and we need lots of it. That’s why, when I learned how AMD was using 3D die-stacking technology to pack three times as much L3 cache into its powerful EPYC™ 7003 Series CPUs, I could see immediately how this would benefit our users, who rely on Simcenter STAR-CCM+ to provide them with timely, accurate simulation results on high-performance computing (HPC) systems.

768 MB of L3 cache per CPU socket is a big deal. This is the big fridge right next to your sofa!

If the data that Simcenter STAR-CCM+’s powerful physics engine needs is not in cache, the core must fetch it all the way from RAM, which can take roughly an order of magnitude longer than a cache access. It’s like running to the garage to get a new case of drinks because the fridge ran out. This means those x86 cores in a user’s CPU sit around, twiddling their thumbs, waiting for data to come in so they can get on with the business of computation. And if Simcenter STAR-CCM+ is waiting, so is everyone else in the pipeline, from Design Manager to HEEDS to, ultimately, the user.
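To put rough numbers on the fridge-versus-garage trip, here is a minimal Python sketch of the textbook average-memory-access-time formula for a single cache level backed by RAM. The latencies and hit rates are placeholder assumptions, picked only to reflect the order-of-magnitude gap described above. They are not measurements of any AMD EPYC™ processor or of Simcenter STAR-CCM+, and the function name is mine.

```python
def average_access_time_ns(hit_rate, cache_latency_ns, ram_latency_ns):
    """Average memory access time for one cache level backed by RAM.

    A hit costs the cache latency; a miss costs the (much longer) RAM latency.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * cache_latency_ns + (1.0 - hit_rate) * ram_latency_ns


# Assumed, illustrative latencies: RAM is about 10x slower than the cache.
L3_LATENCY_NS = 10.0
RAM_LATENCY_NS = 100.0

# Assumed hit rates: a bigger cache should push a solver toward the higher ones.
for hit_rate in (0.50, 0.80, 0.95):
    t = average_access_time_ns(hit_rate, L3_LATENCY_NS, RAM_LATENCY_NS)
    print(f"L3 hit rate {hit_rate:.0%}: {t:.1f} ns average access")
```

Under these assumed numbers, every extra bit of hit rate pulls the average closer to the cache latency, which is the whole point of a bigger fridge.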

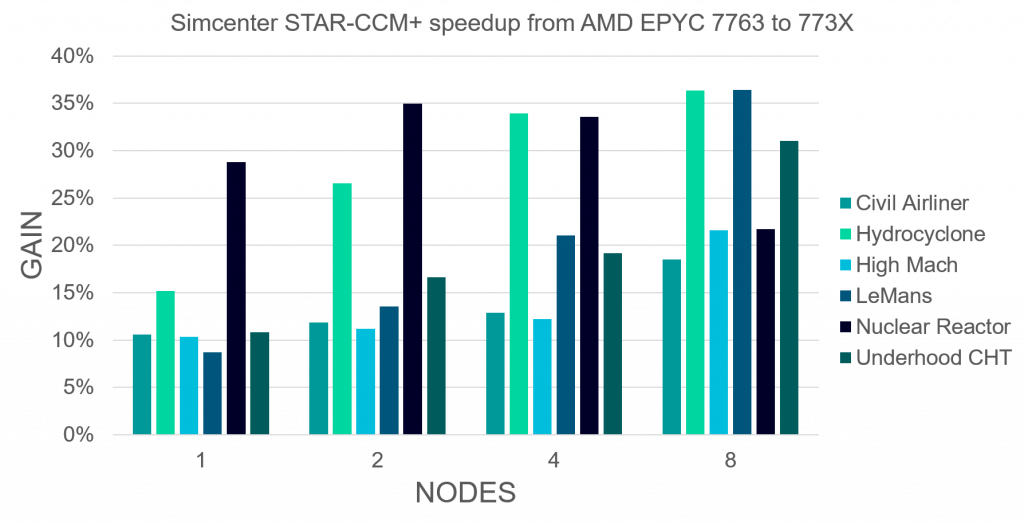

Accelerating CFD by up to 50%

AMD 3D V-Cache™ technology means when Simcenter STAR-CCM+ needs to grab more data to compute fluxes and gradients, those cores don’t have to wait, bringing down the overall time to solution. This benefits our users across the board, accelerating a variety of Simcenter STAR-CCM+ multiphysics simulations by up to 50% over original AMD EPYC™ 7003 Series CPUs.

Now, a high-end server node has a pretty hefty price tag all on its own, and building an HPC cluster can easily cost millions of dollars. To make matters worse, the supply chain crisis affecting global businesses in 2022 has hit the computer industry especially hard, making it tough to get access to the latest hardware. I want every user to have access to cutting-edge HPC performance, and the democratization of HPC by cloud services, such as Microsoft Azure, makes that possible today. It’s like renting a bigger fridge just for the championship – no need to clutter up your room during the regular season.

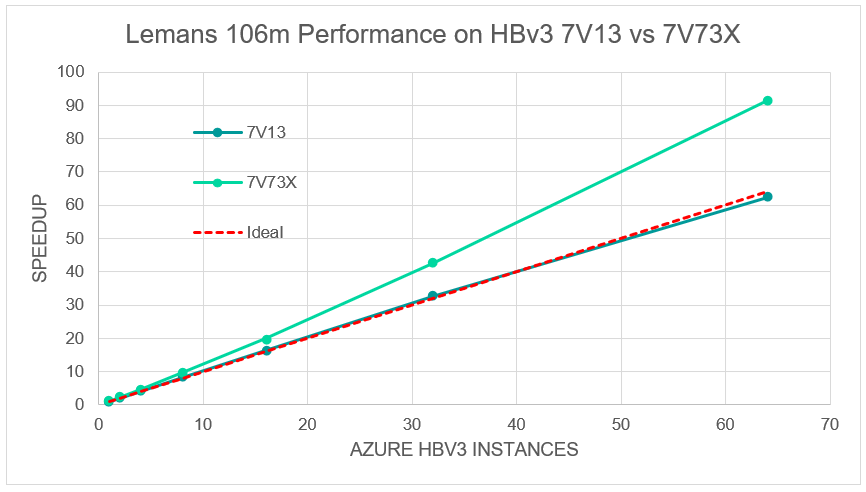

I’m a big fan of Microsoft Azure’s HBv3 virtual machines (VMs). They don’t just have powerful CPUs and tons of RAM; they also have the high-bandwidth, low-latency network backbone needed to deliver true HPC-class performance and scalability. With the launch of HBv3 VMs powered by 3rd Gen AMD EPYC™ processors with AMD 3D V-Cache™ technology, Simcenter STAR-CCM+ users, from the smallest consulting firm to a Fortune 100 company, can run their biggest simulations on the latest compute hardware at HPC scales.

Accelerating CFD at superlinear speedup? How cool is that?

Normally, we think of linear scaling as the ideal case, but as we see in our standard LeMans race car benchmark, those accelerated instances are better than the ideal! Accelerating CFD beyond linear, how is that even possible?

This is because as we split up the problem across more nodes, each core’s share of the mesh gets smaller, so a larger fraction of the data it needs fits in cache. AMD 3D V-Cache™ therefore works more and more efficiently, unlocking more capability in the AMD EPYC™ 7003 Series processor and resulting in superlinear speedup. Our software runs up to 50% faster on these new instances than on the original AMD EPYC™ 7003 Series instances, meaning the user spends as little as two-thirds of the time (1/1.5) waiting for results.
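One way to picture why splitting the problem can beat linear scaling is to look at how much of each socket's slice of the mesh could sit in L3 as the socket count grows. The sketch below is a back-of-the-envelope model, not how Simcenter STAR-CCM+ actually partitions or stores data. The mesh size, bytes per cell, and timings are hypothetical; the 768 MB figure is the one quoted earlier (assumed here to be per socket), and 256 MB is simply one third of it. The function names are mine.

```python
MIB = 2**20


def cached_fraction(total_cells, bytes_per_cell, sockets, l3_bytes_per_socket):
    """Share of one socket's slice of the mesh data that could fit in its L3."""
    working_set = total_cells * bytes_per_cell / sockets
    return min(1.0, l3_bytes_per_socket / working_set)


def parallel_efficiency(base_nodes, base_time_s, nodes, time_s):
    """Measured speedup divided by ideal linear speedup; above 1 is superlinear."""
    speedup = base_time_s / time_s
    ideal = nodes / base_nodes
    return speedup / ideal


# Hypothetical mesh: 100 million cells, 1000 bytes of hot data per cell.
TOTAL_CELLS = 100_000_000
BYTES_PER_CELL = 1_000

for sockets in (16, 64, 256):
    small = cached_fraction(TOTAL_CELLS, BYTES_PER_CELL, sockets, 256 * MIB)
    large = cached_fraction(TOTAL_CELLS, BYTES_PER_CELL, sockets, 768 * MIB)
    print(f"{sockets:4d} sockets: {small:.0%} cached with 256 MiB, "
          f"{large:.0%} with 768 MiB")

# Hypothetical timings: 1 node takes 1000 s, 8 nodes take 110 s.
eff = parallel_efficiency(base_nodes=1, base_time_s=1000.0, nodes=8, time_s=110.0)
print(f"parallel efficiency: {eff:.2f}")
```

In this toy model the larger cache reaches the "everything fits" point at a much lower socket count, which is the kind of effect that can push parallel efficiency above 1.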

There’s nothing more exciting than users running fast simulations with Simcenter STAR-CCM+. Almost nothing!

New computers are always exciting, and 3rd Gen AMD EPYC™ processors with AMD 3D V-Cache™ technology were practically tailor-made for Simcenter STAR-CCM+. I’m pretty excited about what this CPU can do, and I’m thrilled that Microsoft has made it so widely available via their Azure platform. Because really, there’s nothing more exciting than users running fast simulations with Simcenter STAR-CCM+.

Well, apart from watching your favorite team score a game-winning goal when the clock hits 0:00, maybe.
